In this Article, we shall examine the High-Availability solutions in SQL Server 2005 and which solution can be well suited for a certain scenario. Though they are all part of High-Availability not all are feasible in a particular scenario. This article tries to evaluate the 4 HA features, their scope, Advantages and Disadvantages and it is does not explain the mechanism how they function or any other technical implementation details.

Failover Clustering

The reason I start with Failover Clustering is, it provides Availability at an Instance level and most of the Production Instances are implemented using SQL Server Failover Clustering.

Scope

Instance Level (All Databases in a single Instance)

Core Functionality

The main functionality of Failover Clustering is to minimize the downtime of the SQL Server on an instance level.

Requirements

The Hardware needs to be certified for Implementing Failover Clustering. Moreover all the participating Nodes in the Cluster need to be of Similar Hardware Configuration.

Advantages

1. Provides Automatic Failover of the SQL Instance to the Participating Node (Passive Node), hence no manual configuration is required when failover occurs.

2. Is not related to the Recovery model of the Databases.

3. Application that rely on more than one Database of an Instance, do not need changes as all the Database are available upon failover, where as in other 3 HA features, the dependent databases also have to be configured for availability.

Dis-advantages

1. Purely relying on Failover Clustering is not suggested since a Data Disk Failure would result in not having another copy of the Databases to Fallback immediately.

2. There is no redundant copy of the Database; hence if a Reporting Application requires the same copy of the Transactional Database, then it has to rely on the existing Database impacting the performance of the main application.

3. Cannot be implemented with distributed systems, the Nodes are required to be within 100 Miles ( I remember reading in BOL )

Database Mirroring

Scope

Individual Database Level.

Core Functionality

The main functionality of Database Mirroring is to provide a mirrored set of the Database for immediate failback.

Requirements

Does not require a signed or certified Hardware and can be implemented on standby Server which can be of a different configuration unlike Failover Clustering.

Advantages

1. Supports immediate and automatic failover of applications using ADO.Net and SQL Native Client, using Client-side Redirect.

2. Supports multiple modes of configuration namely, High Availability Mode, High Protection and High Performance Mode. Each mode has its advantages, providing an option to choose accordingly based on requirement in hand.

Dis-advantages

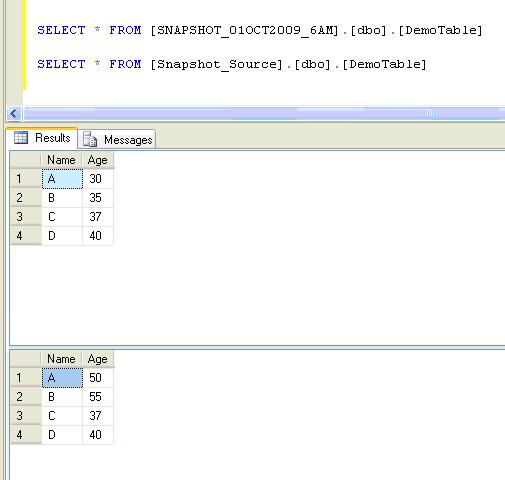

1. Database redundancy is not provided for Read-Only purpose, but a workaround way using Database Snapshots on the Mirrored Database is suggested in BOL. It has to be remembered that Database Snapshots are limited to Enterprise Edition of SQL Server 2005 (Not sure of Database Snapshots on the Mirrored Database in SQL Server 2008)

2. Can be implemented only with Databases having FULL Recovery model, hence databases with other recovery models cannot be used in Database Mirroring.

Log Shipping

Scope

Individual Database Level.

Core Functionality

Provides Database availability by shipping transaction logs onto single / multiple Secondary servers.

Requirements

Does not require Signed Hardware and does not require Hardware that is Similar to the Primary Server.

Advantages

1. Using immediate restore option, we can setup almost an exact copy of the production system at the Secondary Server.

2. Delayed Restore; using this option you setup a secondary Server with the transaction Logs shipped but are not restored immediately, this option would be highly helpful, if incase of Data Corruption / Loss because of a User accidentally deleting / dropping / modifying important Data. If acted immediately, you should be able to recover the Data from the Database on the secondary Server.

3. Multiple Secondary Databases can be setup for a single Primary Database, providing the option to use one copy for DR and another for a reporting kind of use (The Users need to be disconnected when the Logs are restored and that can be done Automatically by SQL Server), so restoring Job can be scheduled for every 6 Hours so as not to interrupt reporting users frequently.

Dis-advantages

1. Automatic Redirection / Failover of the application is not possible.

2. When compared to Database Mirroring or Transactional Replication there is a slight delay in synchronizing the Database at the secondary server.

3. Can be implemented only with Databases having FULL/Bulk-Logged Recovery model.

4. Sometimes Entire Database is not required for the application when coming back immediately from a Disaster. Let me explain, a Database has multiple years (let’s say 7) of transactional Data and you may not need the entire 7 years of Data when disaster strikes, the most recent few years (may be 2 or 3) is good enough to start immediately. OR a subset of the Database is required for a Marketing application; you cannot provide it using Log Shipping.

Replication

Scope

Database Object Level

Core Functionality

Provides a set of tools that enable copying of the Source Data to multiple Distributed Servers for High Availability and Scalability. There are 3 types of Publications available and can be used based on the data change frequency or direction of Data flow (updateable and Merge Replications).

Requirements

Designated Hardware Servers are not required.

Advantages

1. Provides redundancy of Database, giving a choice to use one system for reporting and another for DR or Hot standby etc.

2. Can be used to build Scale-Out Implementation by using Peer-to-Peer Transactional Replication.

3. Can be used to setup a Reporting / Data Warehousing System which needs only some part of the Source Database, this can be achieved using Horizontal and Vertical filtering of Data.

4. Oracle Database can be used as a Publisher.

5. Independent of the Recovery Model of the Database, hence any database can be published.

Dis-advantages

1. Automatic Failover of the application is not possible, the application has to be coded / changed to use the standby system.

2. Many Table related properties, such as Index Partition Schemes, Check Constraints and Non Clustered Indexes etc are not propagated to the subscribers by default; they need to be configured when publishing.

3. Data Conflict can occur when Merge Replication is configured and sometimes may turn out to be very complex to solve the conflicts.

Conclusion:

Each of the high availability features presents a way to have Database (or Data) availability, and at the same time cannot be used in all scenarios. It is through proper understanding and planning, one or more than one of these features are used in order to achieve the desired goal or setup a DR Environment.

Do you like this site? Like our FB page @ Facebook.com\LearnSQLWithBru to know when there is new content.

–Bru Medishetty